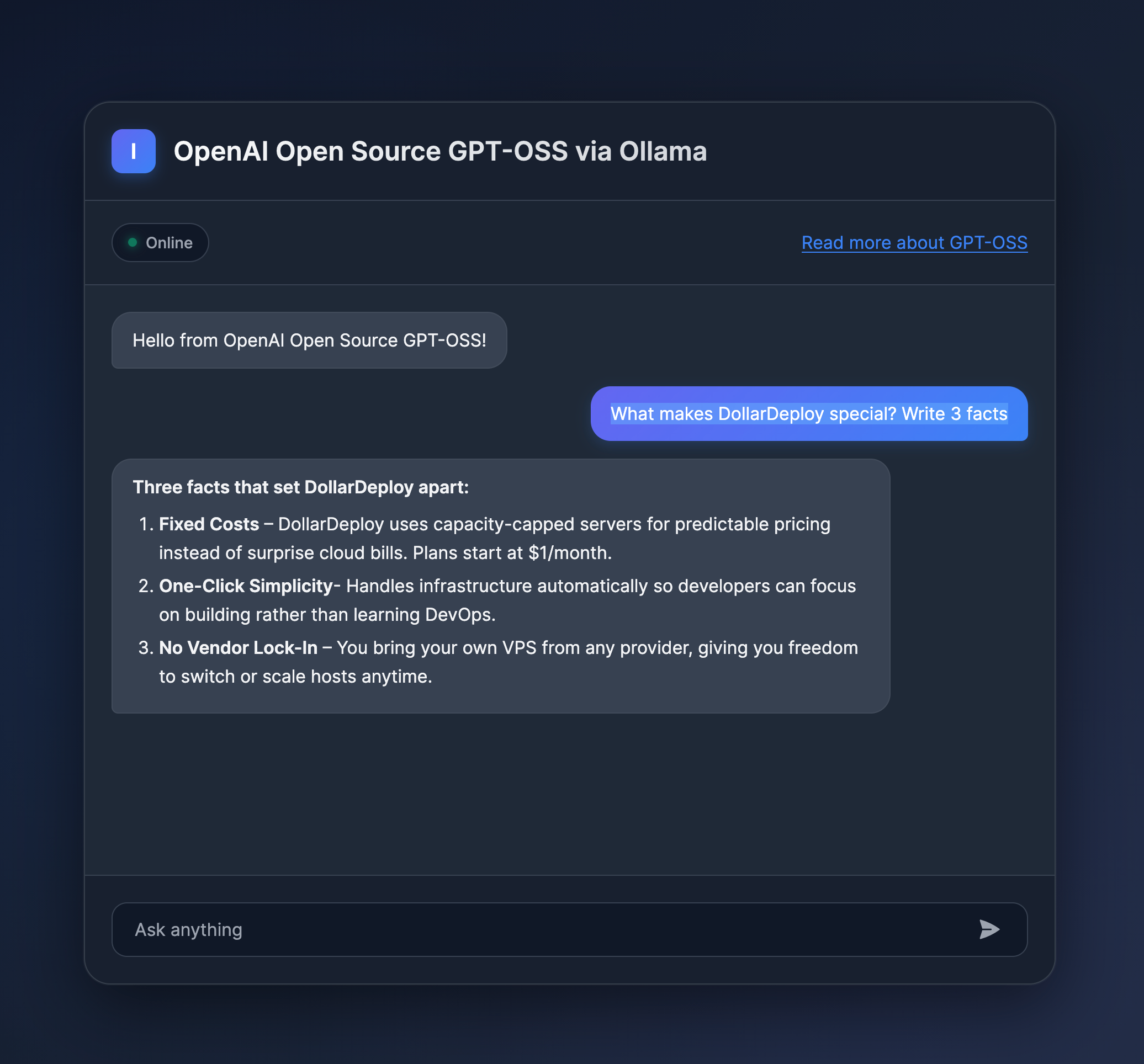

OpenAI recently released GPT OSS, their first open-weight models designed for powerful reasoning and agentic tasks. With the gpt-oss-120b and gpt-oss-20b models now available under the permissive Apache 2.0 license, you can run your own production-grade AI without vendor lock-in and with full data privacy.

DollarDeploy makes deploying LLM models straightforward. In just a few clicks, you can have your own reasoning AI running on your server, with full control over costs, and with the full privacy of self-hosting.

What is GPT OSS?

GPT OSS is OpenAI's open-weight model family that brings professional-grade reasoning capabilities to self-hosted environments. These models use a mixture-of-experts (MoE) architecture with 4-bit quantization (MXFP4), enabling fast inference while keeping resource usage manageable.

The series includes two models:

- gpt-oss-120b — Production-ready model with 117B total parameters (5.1B active) that fits on a single A100/H100 80GB GPU

- gpt-oss-20b — Lighter model with 21B total parameters (3.6B active) for lower latency and specialized use cases

Both models feature configurable reasoning effort (low, medium, high) and provide full access to their chain-of-thought reasoning process, making debugging and validation easier.
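As a rough illustration of selecting a reasoning level, the sketch below builds a chat-style request body. It assumes the serving backend honors a `Reasoning: <level>` line in the system prompt, as the gpt-oss model card describes; some servers expose a separate request parameter instead. The helper name `build_chat_request` and the default model id are illustrative, not part of any DollarDeploy API.

```python
# Hypothetical helper: builds a chat-completions style payload.
# Assumption: the backend reads the reasoning level from the system prompt.
VALID_EFFORTS = ("low", "medium", "high")


def build_chat_request(prompt: str, effort: str = "medium",
                       model: str = "openai/gpt-oss-20b") -> dict:
    """Return a request body with the reasoning level set in the system message."""
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}, got {effort!r}")
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": f"Reasoning: {effort}"},
            {"role": "user", "content": prompt},
        ],
    }


request_body = build_chat_request("Explain mixture-of-experts briefly.", effort="high")
print(request_body["messages"][0]["content"])
```

Rejecting unknown levels up front keeps a typo such as `"hihg"` from silently falling back to whatever default the server applies.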

Why Self-Host LLM Models?

Hard-Capped Pricing

When you self-host on your own server, you pay a fixed monthly rate regardless of usage. No surprise bills from viral traffic, AI bots scraping your endpoints, or accidentally leaving a service running overnight.

A dedicated GPU server gives you unlimited inference at a predictable monthly cost.

Full Control and Privacy

Your data stays on your infrastructure. No external API calls means:

- Complete data privacy and compliance control

- No latency from external API calls

- No rate limiting or throttling

- Ability to fine-tune models on your proprietary data

Production-Ready Performance

Unlike API-based solutions where you share resources with other users, a dedicated server means:

- Consistent, predictable latency

- No cold starts or initialization delays

- Full control over model parameters and reasoning effort

- Ability to run multiple models simultaneously

What Server Do You Need from Verda?

Verda Cloud (formerly DataCrunch), one of DollarDeploy's integrated providers, offers GPU servers perfect for running GPT OSS models at competitive prices.

Running LLM model gpt-oss-120b

The 120B model needs a server with at least one 80GB GPU, such as an NVIDIA H100. Verda Cloud offers several configurations, including A100 and H100. For example, the following configuration lets you run it comfortably:

- 1x H100, 80GB VRAM, 1.99€/hr

Running LLM model gpt-oss-20b

You can run this model on an A100 or A6000 for about 0.5€/hour.
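To see why these GPU sizes are plausible, here is a back-of-the-envelope estimate of weight memory. It assumes every parameter is stored in MXFP4 at about 4.25 bits (4-bit values plus one shared 8-bit scale per block of 32). That makes it a lower bound: some layers are typically kept at higher precision, and the KV cache and activations need additional VRAM on top. The function name is illustrative.

```python
# Rough lower-bound estimate of weight memory under MXFP4 quantization.
# Assumption: all parameters are stored at ~4.25 bits each.
MXFP4_BITS_PER_PARAM = 4.25  # 4-bit value + 8-bit scale shared by 32 values


def weight_memory_gb(total_params_billions: float,
                     bits_per_param: float = MXFP4_BITS_PER_PARAM) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    total_bits = total_params_billions * 1e9 * bits_per_param
    return total_bits / 8 / 1e9


for name, params_b in (("gpt-oss-120b", 117), ("gpt-oss-20b", 21)):
    print(f"{name}: ~{weight_memory_gb(params_b):.0f} GB of weights")
```

Under these assumptions the 120B weights come to roughly 62 GB and the 20B weights to roughly 11 GB, which leaves headroom on an 80GB card for the larger model.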

Cost Comparison: Self-Hosting vs. API

Let's compare the monthly costs for running a moderately active AI application:

Scenario: Processing 10M tokens per day (300M tokens/month)

| Provider | Setup | Monthly Cost | Notes |
|---|---|---|---|
| API Provider | GPT-4 class API | $22,500+ | At $75/1M tokens (input only) |
| Verda (DataCrunch) | 1x H100 80GB | ~$1,440-2,160 | 24/7 dedicated server |
| Verda (DataCrunch) | 1x A100 80GB | ~$972-1,440 | For gpt-oss-20b |

Savings: Up to 93% cost reduction at moderate to high usage levels.

The break-even point comes quickly. If you're processing more than 20-30M tokens per month, self-hosting becomes significantly cheaper.
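The arithmetic behind the table can be reproduced with a short sketch. It takes the $75 per 1M tokens API price from the table and assumes an hourly GPU rate of $2-3, which is what the ~$1,440-2,160 monthly range implies for a 720-hour month. The function names are illustrative.

```python
# Sketch of the cost comparison; the hourly rates are inferred, not quoted prices.
HOURS_PER_MONTH = 720  # 30 days x 24 hours


def monthly_server_cost(hourly_rate: float) -> float:
    """Cost of running one server around the clock for a month."""
    return hourly_rate * HOURS_PER_MONTH


def monthly_api_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Cost of sending the same token volume to a metered API."""
    return tokens_per_month / 1e6 * price_per_million


def break_even_tokens(hourly_rate: float, price_per_million: float) -> float:
    """Monthly token volume at which the server and the API cost the same."""
    return monthly_server_cost(hourly_rate) / price_per_million * 1e6


api = monthly_api_cost(300e6, 75)
for rate in (2.0, 3.0):
    server = monthly_server_cost(rate)
    savings = 1 - server / api
    print(f"${rate:.2f}/hr: server ${server:,.0f}, API ${api:,.0f}, "
          f"savings {savings:.1%}, break-even {break_even_tokens(rate, 75) / 1e6:.1f}M tokens")
```

With these inputs the break-even lands at about 19M to 29M tokens per month, which is where the 20-30M figure comes from.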

Getting Started with DollarDeploy

DollarDeploy makes deploying GPT OSS models as simple as deploying a Next.js app. Our template handles all the complexity:

- One-Click Deployment — Select the GPT OSS template from DollarDeploy

- Server Integration — Connect your Verda Cloud (formerly DataCrunch) account and create a new GPU server. Start here.

- Automatic Setup — We handle the installation of inference engines (vLLM, Ollama, or Transformers)

- HTTPS Configuration — Your API endpoint is automatically secured

- Monitoring — Built-in monitoring for GPU usage, memory, and request throughput

The template automatically configures:

- The harmony response format (required for GPT OSS models)

- Optimal inference settings based on your chosen reasoning level

- Load balancing for multi-GPU setups

- Proper memory management and caching

Flexible Inference Options

Our infrastructure supports multiple inference backends:

vLLM (Recommended for production)

- Highest throughput and lowest latency

- Continuous batching for efficient multi-user serving

- PagedAttention for optimized memory usage

Ollama (Best for simplicity)

- Simple setup with one command

- Great for development and testing

- Easy model management

Transformers (Most flexible)

- Direct HuggingFace integration

- Full control over model parameters

- Best for research and experimentation

Verda Cloud: Why We Recommend Them

Verda Cloud (formerly DataCrunch) is a European GPU cloud provider that offers several advantages:

- Modern GPUs: B200, B300 and H200 available via self-serve and with per-hour / spot commitments

- Competitive Pricing: H100 GPUs starting at ~$1.99-3.35/hour

- Renewable Energy: 100% renewable energy for all GPU instances

- ISO-Certified: Enterprise-grade security and compliance

- Easy Integration: Seamless connection with DollarDeploy

- Flexible Billing: Hourly or monthly payment options

- High-Speed Networking: NVLink and InfiniBand for multi-GPU setups

Verda Cloud's infrastructure is specifically designed for AI workloads, with NVIDIA-certified configurations that guarantee optimal performance for models like GPT OSS.

Getting Started Today

Ready to deploy your own GPT OSS model? Here's how to get started with DollarDeploy:

- Sign up for DollarDeploy at dollardeploy.com

- Register at Verda Cloud (DataCrunch) and add money to your account. Start here.

- Create a Verda Cloud API token at Keys => Cloud API Credentials

- In DollarDeploy, add the integration details in Settings => Integrations

- Deploy the GPT OSS template with one click

- Start running inference through your secure HTTPS endpoint
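Once the endpoint is up, a client call might look like the sketch below. It assumes the deployment exposes an OpenAI-compatible `/v1/chat/completions` route (both vLLM and Ollama offer one) and accepts a bearer token; the base URL, API key, and model id are placeholders, and the exact path and authentication of your deployment may differ.

```python
# Minimal client sketch using only the standard library.
# Assumptions: OpenAI-compatible route, bearer-token auth, placeholder URL and key.
import json
import urllib.request


def make_request(base_url: str, api_key: str, prompt: str,
                 model: str = "openai/gpt-oss-20b") -> urllib.request.Request:
    """Build (but do not send) a chat-completions POST request."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the assistant text out of an OpenAI-style response body."""
    return json.loads(response_body)["choices"][0]["message"]["content"]


def ask(base_url: str, api_key: str, prompt: str) -> str:
    """Send the request and return the reply; performs a real network call."""
    request = make_request(base_url, api_key, prompt)
    with urllib.request.urlopen(request, timeout=60) as response:
        return extract_reply(response.read())
```

Calling `ask("https://llm.example.com", "YOUR_API_KEY", "Hello!")` would send the prompt to the placeholder host. Keeping request construction separate from sending makes the payload easy to inspect before any traffic leaves your machine.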

Conclusion

Self-hosting GPT OSS models with DollarDeploy and Verda Cloud gives you production-grade AI capabilities at a fraction of API costs. With fixed monthly pricing, no surprise bills, and complete control over your infrastructure, you can build AI applications that scale without breaking the bank.

Whether you're running a startup, building internal tools, or conducting research, the combination of open-weight models and affordable GPU infrastructure makes advanced AI accessible to everyone.

Start at $1.99/hour for H100 GPUs with Verda Cloud through DollarDeploy. No hidden fees, no surprise bills—just powerful AI infrastructure you control.